Live Video Knowledge III: Playback and Playback Completion

Preface

This article covers what happens on the playback side after the stream has been pulled: decapsulation, decoding, and rendering.

Articles in the same series:

- Live Video Knowledge I: Data Acquisition and Encoding

- Live Video Knowledge II: Push and Pull Streams, and Server-Side Processing

- Live Video Knowledge III: Playback and Playback Completion

- Live Video Knowledge IV: Live Demo – RTMP Push and HTTP-FLV Pull Streams

1 Playback Flow

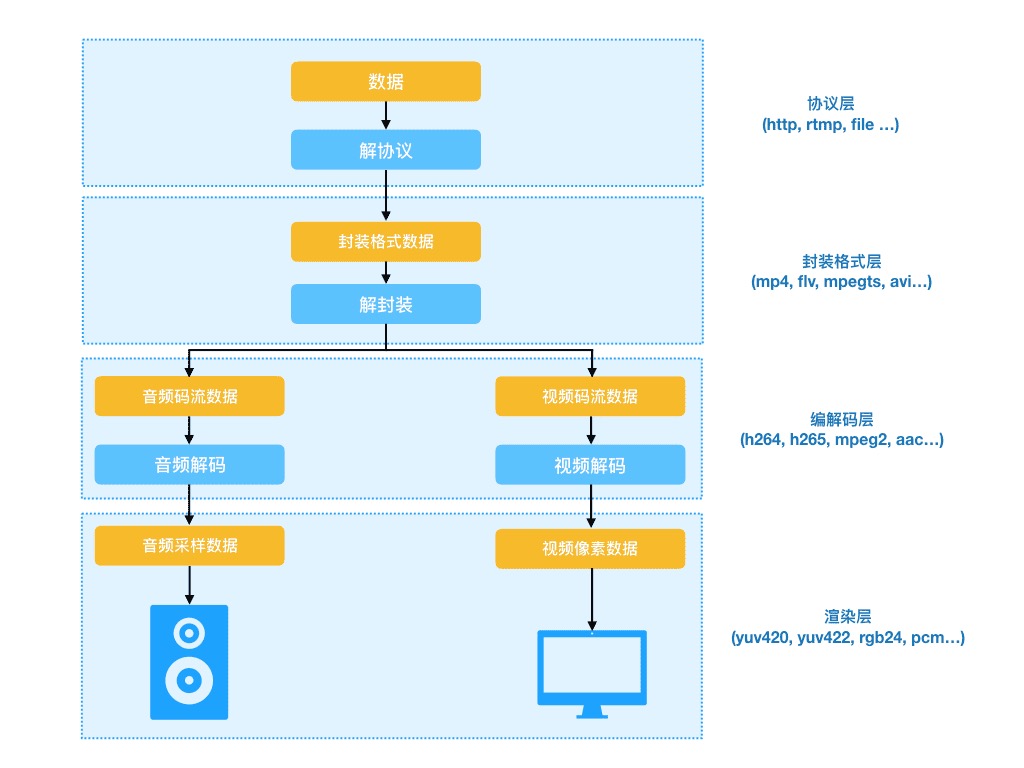

After pulling the stream data, a traditional client player goes through the following steps to play the video:

1.1 Decapsulation

Before playback can start, the player must separate the video, audio, and subtitle data (subtitles may be absent) from the pulled stream according to its container format (e.g., MP4). This separation process is called demultiplexing, or demux.

Decapsulation yields the elementary streams (video, audio, subtitles), each of which can then be handed to a decoder.
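As a rough illustration of demuxing, here is a minimal sketch that assumes the pulled data is in the FLV container (the format used for pulling in part IV of this series). It walks the FLV tags and labels each payload as audio, video, or script data. The names `demux_flv` and `make_flv_tag` are hypothetical helpers invented for this example, and a real player would also handle partial reads and parse the codec headers inside each tag.

```python
from typing import Iterator, Tuple

# FLV tag types: 8 = audio, 9 = video, 18 = script data (metadata).
TAG_NAMES = {8: "audio", 9: "video", 18: "script"}


def demux_flv(data: bytes) -> Iterator[Tuple[str, int, bytes]]:
    """Yield (stream_kind, timestamp_ms, payload) for each complete FLV tag."""
    if data[:3] != b"FLV":
        raise ValueError("not an FLV stream")
    header_size = int.from_bytes(data[5:9], "big")  # DataOffset field
    pos = header_size + 4  # skip the file header and PreviousTagSize0
    while pos + 11 <= len(data):
        tag_type = data[pos] & 0x1F  # low 5 bits hold the tag type
        size = int.from_bytes(data[pos + 1:pos + 4], "big")
        # 24-bit timestamp plus one extension byte carrying the high 8 bits
        ts = int.from_bytes(data[pos + 4:pos + 7], "big") | (data[pos + 7] << 24)
        payload = data[pos + 11:pos + 11 + size]
        if len(payload) < size:
            break  # incomplete tag: a real player would wait for more data
        yield TAG_NAMES.get(tag_type, "unknown"), ts, payload
        pos += 11 + size + 4  # tag header + payload + PreviousTagSize


def make_flv_tag(tag_type: int, ts: int, payload: bytes) -> bytes:
    """Build one FLV tag (test helper, not part of a player)."""
    header = (
        bytes([tag_type])
        + len(payload).to_bytes(3, "big")
        + (ts & 0xFFFFFF).to_bytes(3, "big")
        + bytes([(ts >> 24) & 0xFF])
        + b"\x00\x00\x00"  # StreamID, always 0
    )
    return header + payload + (11 + len(payload)).to_bytes(4, "big")


if __name__ == "__main__":
    # File header: signature, version 1, audio+video flags, header size 9,
    # followed by PreviousTagSize0.
    stream = b"FLV\x01\x05" + (9).to_bytes(4, "big") + (0).to_bytes(4, "big")
    stream += make_flv_tag(9, 0, b"video-bytes") + make_flv_tag(8, 23, b"audio-bytes")
    for kind, ts, payload in demux_flv(stream):
        print(kind, ts, len(payload))
```

Each yielded payload would then be routed to the matching decoder, which is the next step.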

1.2 Decoding

After decapsulation, the separated streams are still compressed. Each one must be decoded according to its coding format (e.g., H.264 or H.265 for video) to produce raw data that the audio and video renderers can play.

- The data obtained by audio decoding is PCM sample data.

- The data obtained by video decoding is YUV or RGB image data.
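To give a feel for how much data decoding produces, the following sketch computes the size of one decoded video frame and of a stretch of decoded audio. It assumes 8-bit YUV 4:2:0 planar video and integer PCM audio. These are common decoder output formats, but they are assumptions here, not something fixed by the codec. The function names are hypothetical.

```python
def yuv420p_frame_bytes(width: int, height: int) -> int:
    """Bytes in one 8-bit YUV 4:2:0 planar frame."""
    luma = width * height  # one Y sample per pixel
    # U and V are subsampled by 2 in both directions (rounded up).
    chroma = ((width + 1) // 2) * ((height + 1) // 2)
    return luma + 2 * chroma


def pcm_bytes(sample_rate: int, channels: int, bits_per_sample: int, seconds: int) -> int:
    """Bytes of uncompressed integer PCM audio for the given duration."""
    return sample_rate * channels * (bits_per_sample // 8) * seconds


if __name__ == "__main__":
    print(yuv420p_frame_bytes(1920, 1080))  # 3110400 bytes per 1080p frame
    print(pcm_bytes(44100, 2, 16, 1))       # 176400 bytes per second of stereo audio
```

At 25 frames per second, decoded 1080p video in this format amounts to roughly 78 MB per second, which is why streams are transmitted compressed and decoded only just before rendering.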

1.3 Rendering

Rendering means presenting the decoded data on the device's output hardware (display, speakers). The module responsible for this is called the renderer. On the web, players are usually embedded using the HTML video element.
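Displays are typically driven with RGB, so when the decoder outputs YUV, the renderer (or the GPU on its behalf) converts each pixel before showing it. The sketch below shows that conversion for a single pixel. It assumes full-range BT.601 coefficients, as used by JPEG/JFIF. Real video is often limited-range or BT.709, so treat the constants as one possible choice rather than what a given player does. The function names are hypothetical.

```python
from typing import Tuple


def clamp_to_byte(value: float) -> int:
    """Round and clamp a channel value into the 0..255 range."""
    return max(0, min(255, round(value)))


def yuv_to_rgb(y: int, u: int, v: int) -> Tuple[int, int, int]:
    """Convert one full-range BT.601 YUV pixel to 8-bit RGB."""
    d = u - 128  # blue-difference chroma, centered at zero
    e = v - 128  # red-difference chroma, centered at zero
    r = y + 1.402 * e
    g = y - 0.344136 * d - 0.714136 * e
    b = y + 1.772 * d
    return clamp_to_byte(r), clamp_to_byte(g), clamp_to_byte(b)


if __name__ == "__main__":
    # Neutral chroma (U = V = 128) leaves a gray pixel unchanged.
    print(yuv_to_rgb(128, 128, 128))  # (128, 128, 128)
```

In practice this per-pixel math runs in a GPU shader or a hardware overlay rather than in application code, since it has to be applied to every pixel of every frame.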